Responsible AI, Trusted By Global Enterprises & Higher Ed Experts

In education, trust is not a feature; it is the foundation. That’s why Responsible AI is embedded into every layer of our platform, from system design to user experience to reporting and insights.

Our approach is grounded in a simple principle: ethical design drives better student success. When systems are transparent, secure, and accountable, institutions can confidently scale personalized guidance while staying focused on what matters most.

Our Responsible AI team includes senior engineering leaders, Fortune 500 AI practitioners, data privacy experts, and educators across public and private institutions.

Supporting Thousands of Advising Conversations Each Month | Trusted by Leading Institutions & Teams Worldwide | 98% Ethical AI Satisfaction Score | Informed by 100+ Research Experiments

DATA SECURITY & INTEGRITY

Built to safeguard sensitive data across the full student lifecycle.

- Role-based access controls: Granular permissions ensure only the right individuals access the right data at the right time.

- Secure infrastructure: Enterprise-grade authentication and encryption protect data across integrations and real-time usage.

- Institution-level data isolation: Each partner environment is securely separated to ensure data integrity and confidentiality.

- Backup, recovery & uptime resilience: Redundant systems and failover protocols ensure continuity of operations and minimize disruptions.

HUMAN CONNECTION & TRUST

AI that strengthens advising relationships at scale.

- Human-in-the-loop design: Advisors remain central to key decisions, with visibility into platform recommendations and student engagement.

- Proactive engagement tools: Integrated communication enables timely outreach at key student milestones, without added workload for staff.

- Accessibility: Students are guided toward human support when it matters most, such as during moments of uncertainty or when navigating key resources.

- Trust-centered experience: Students understand when they are interacting with AI and how it complements human support.

How Higher Education Institutions Are Setting The Stage for Responsible AI

In this thought leadership piece, Arjun Arora, Founder of Advisor AI, examines why AI project failures have become routine and what leading institutions and teams are doing differently. From chatbots going off-script to rushed deployments that erode trust, the headlines are familiar. Yet behind the noise, a quieter reality is emerging: organizations that take a disciplined, intentional approach to AI are getting it right.

TRANSPARENCY & EXPLAINABILITY

Assistance that users can trust, understand, and review.

- Explainable recommendations: Each output includes clear reasoning tied to academic pathways, career outcomes, or institutional resources.

- Model transparency: Documentation and institutional FAQs provide clarity into how systems are evaluated and improved over time.

- Data tracking & auditability: Institutions can review and trace recommendations for compliance and continuous improvement.

RISK MANAGEMENT & GOVERNANCE

Enterprise-grade controls for safe, reliable deployment.

- Defined governance frameworks: Clear policies guide AI development, deployment, and ongoing monitoring across each institution.

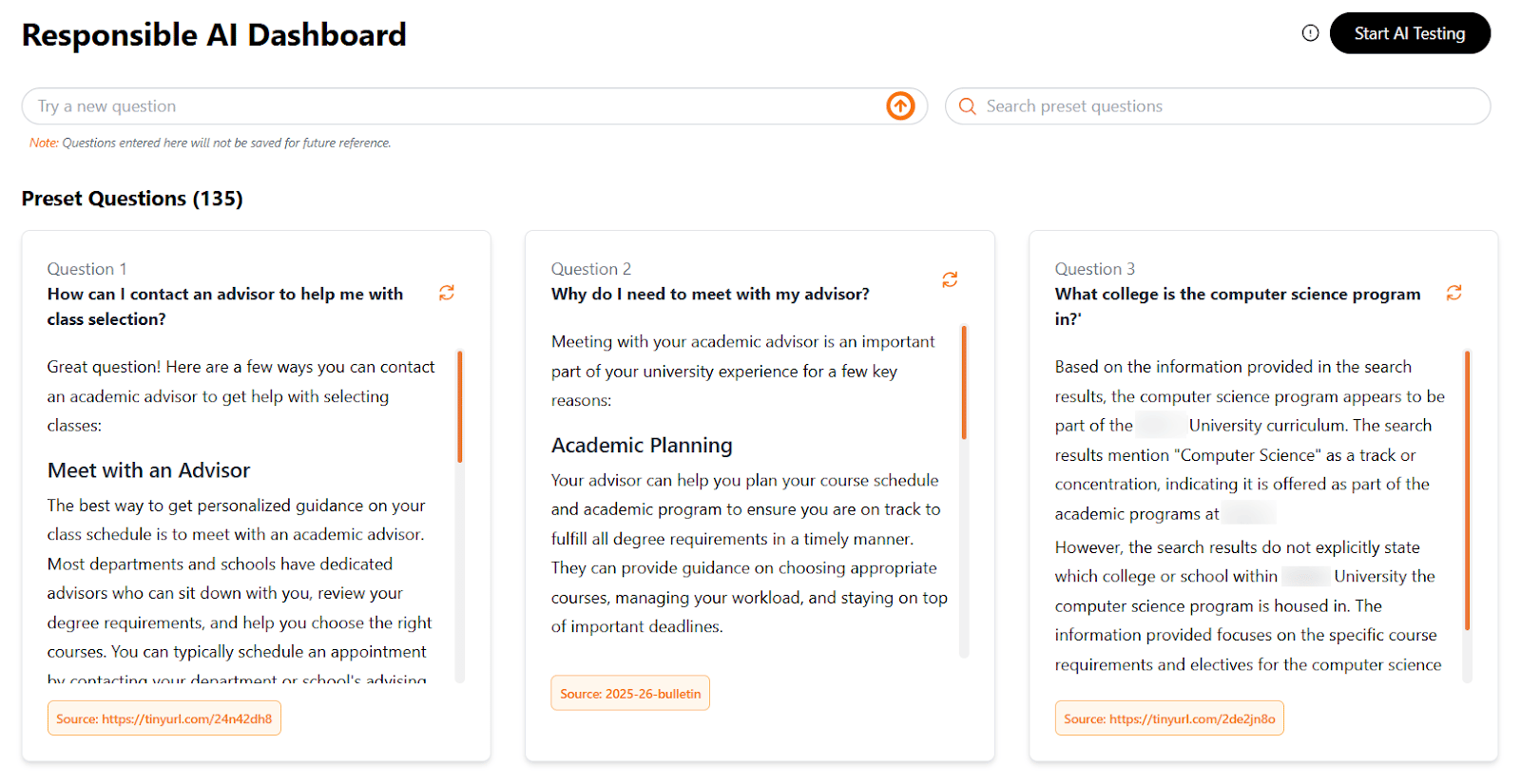

- Continuous monitoring: Easy-to-use dashboards enable ongoing tracking of system quality, usage, and engagement over time.

- Compliance-ready design: Built to align with evolving regulatory and institutional standards for responsible and ethical use of technology.

BIAS & FAIRNESS TESTING

Ensuring equitable outcomes across all student populations.

- Pre-deployment evaluation: Models are tested across diverse student demographics, academic histories, and career pathways.

- Fairness benchmarking: Standardized metrics help ensure recommendations are equitable and inclusive across groups.

- Ongoing model refinement: Continuous updates incorporate institutional feedback data and emerging best practices.
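As an illustration of what a fairness benchmark can look like in practice, the sketch below computes a demographic parity gap: the difference between the highest and lowest recommendation rates across student groups. This is a minimal example and not a description of Advisor AI's actual metrics; the function names, group labels, and sample records are all hypothetical.

```python
from collections import defaultdict


def recommendation_rates(records):
    """Return the share of positive recommendations for each group.

    `records` is an iterable of (group, recommended) pairs, where
    `recommended` is True when a resource was surfaced to the student.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, recommended in records:
        totals[group] += 1
        if recommended:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(records):
    """Demographic parity gap: highest group rate minus lowest group rate."""
    rates = recommendation_rates(records)
    return max(rates.values()) - min(rates.values())


# Hypothetical evaluation records, for illustration only.
sample = [
    ("first_gen", True), ("first_gen", False),
    ("first_gen", True), ("first_gen", True),
    ("continuing_gen", True), ("continuing_gen", True),
    ("continuing_gen", False), ("continuing_gen", False),
]

print(recommendation_rates(sample))
print(parity_gap(sample))
```

A gap near zero suggests groups receive recommendations at similar rates. What counts as an acceptable gap is a policy decision each institution would need to make for itself.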

COMPREHENSIVE GUARDRAILS

Proactive systems to ensure safe, reliable, and accurate AI.

- Pre-launch stress testing: Simulations identify edge cases and potential failure scenarios before deployment.

- Content safety: Filters and safeguards prevent harmful, misleading, or inappropriate outputs.

- Confidence-based fallbacks: When certainty is low, the system defers to safer responses or custom human intervention notes.
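To make the idea of a confidence-based fallback concrete, here is a minimal sketch of how such a rule could work. It assumes the system produces a draft answer with a confidence score between 0 and 1. The threshold value, the `Reply` type, and the message wording are hypothetical and are not taken from Advisor AI's implementation.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical threshold; a real deployment would tune this per use case.
CONFIDENCE_THRESHOLD = 0.75

# Hypothetical safer response shown when the draft answer is withheld.
FALLBACK_MESSAGE = "I'm not certain about this one. Please check with your advisor."


@dataclass
class Reply:
    text: str                           # what the student sees
    used_fallback: bool                 # True when the draft answer was withheld
    advisor_note: Optional[str] = None  # context passed to a human advisor


def respond(draft_answer: str, confidence: float,
            threshold: float = CONFIDENCE_THRESHOLD) -> Reply:
    """Return the draft answer only when confidence meets the threshold."""
    if confidence >= threshold:
        return Reply(text=draft_answer, used_fallback=False)
    note = f"Low-confidence answer withheld (confidence={confidence:.2f})."
    return Reply(text=FALLBACK_MESSAGE, used_fallback=True, advisor_note=note)


print(respond("BIO 101 satisfies your lab science requirement.", 0.92))
print(respond("You may be able to waive MATH 120.", 0.40))
```

In this sketch, the low-confidence case both shows the student a safer message and records a note for an advisor, mirroring the two fallback paths described above.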

Empowering 20,000+ Advising Professionals Globally With "Ask NACE"

“Ask NACE” is a first-of-its-kind chatbot trained exclusively on decades of trusted research and resources from the National Association of Colleges and Employers. Serving more than 20,000 advising and recruiting professionals across 2,000+ colleges and businesses, it makes it easier for teams to find answers, explore ideas, and act on insights.

MINIMIZING ENVIRONMENTAL IMPACT

Efficient AI designed for purposeful engagement, not addiction.

- Low-frequency, high-impact engagement: Designed for intentional use during key student decisions, not constant interaction.

- Energy-efficient infrastructure: Optimized systems reduce computational load and energy consumption significantly.

- Reduced noise: No engagement-maximizing patterns such as infinite scroll or unnecessary notifications.

Modular System, Easy To Launch

Once institutional needs and strategic objectives are identified, the Advisor AI team develops a collaborative action plan for launching the system and tailors it with university-specific information, such as course catalogs, degree milestones, and resource FAQs—all within a four-week timeframe. Based on the strategic value of use cases and student engagement results, additional integrations are discussed.

The onboarding process is simple: University representatives add the app link to their website while Advisor AI works with IT to establish user authentication and app enrollment. Because the platform is intuitive, students and advisors typically need only 10 to 15 minutes of training, enabling institutions to transform the student experience and demonstrate institutional effectiveness from day one.

Finding the Right Balance Outside the Classroom: Navigating AI Ethics While Preserving the Human Touch in Advising and Student Support

Featured Resources

Frequently Asked Questions

Why is Advisor AI viewed as a leader in responsible AI adoption in education?

Advisor AI treats ethics as a design principle, not a feature. The platform is intentionally built to support and extend the work of human advisors—not replace them—reinforcing accountability, trust, and student-centered decision-making at scale.

What this really means: It’s like having a really smart GPS that helps you plan your route and explore options, but you’re still the one driving the car.

How does Advisor AI protect institutional and user data?

Advisor AI uses a system architecture that separates data and model environments for training and testing. This approach is designed to prevent institutional data from being exposed to external models or unauthorized access.

What this really means: Unlike many edtech companies that rely on shared environments, institutional data is treated like a sealed document placed in a private archive—protected within its own vault and housed in an environment reserved solely for each institution.

Does Advisor AI make autonomous decisions?

No. Advisor AI neither approves nor takes action independently. All decisions remain under human control and aligned with institutional policies and governance.

What this really means: It can suggest options, but a real person always makes the final call—just like a teacher reviewing your work before it’s graded.

How does Advisor AI address bias and fairness?

The platform incorporates built-in bias and fairness guardrails. Models are trained on anonymized, representative datasets and continuously monitored to identify and mitigate risks.

What this really means: It’s designed to treat every student or administrative user fairly, not just the loudest, fastest, or most confident ones.

How does the platform avoid over-reliance on AI?

Advisor AI avoids engagement-driven or addictive design patterns. Guidance is delivered in short, purposeful steps, and students are encouraged to consult advisors for high-stakes decisions—supporting informed use rather than dependence.

What this really means: It gives helpful nudges, not constant notifications or pressure to stay glued to the screen.

Why are Advisor AI’s recommendations explainable and trustworthy?

Each recommendation includes a clear rationale grounded in academic catalogs, career pathways, and/or institutional data. This transparency supports trust, adoption, and responsible decision-making. Feedback loops support continuous improvement.

What this really means: It shows its work—so you know why it’s suggesting something, not just what it’s suggesting.